Using CLIP to Classify Images without any Labels | by Cameron R. Wolfe, Ph.D. | Towards Data Science

OpenAI's CLIP Explained and Implementation | Contrastive Learning | Self-Supervised Learning - YouTube

Process diagram of the CLIP model for our task. This figure is created... | Download Scientific Diagram

Collaborative Learning in Practice (CLiP) in a London maternity ward-a qualitative pilot study - ScienceDirect

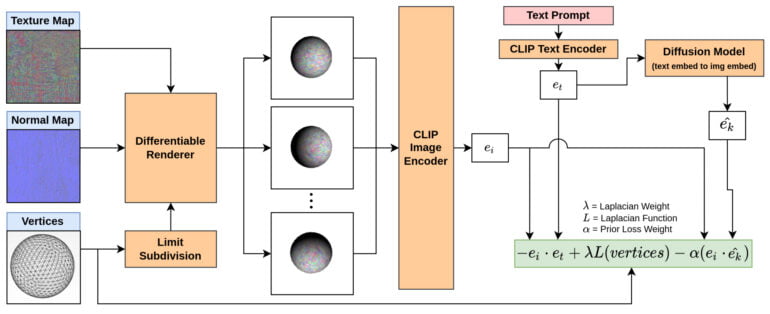

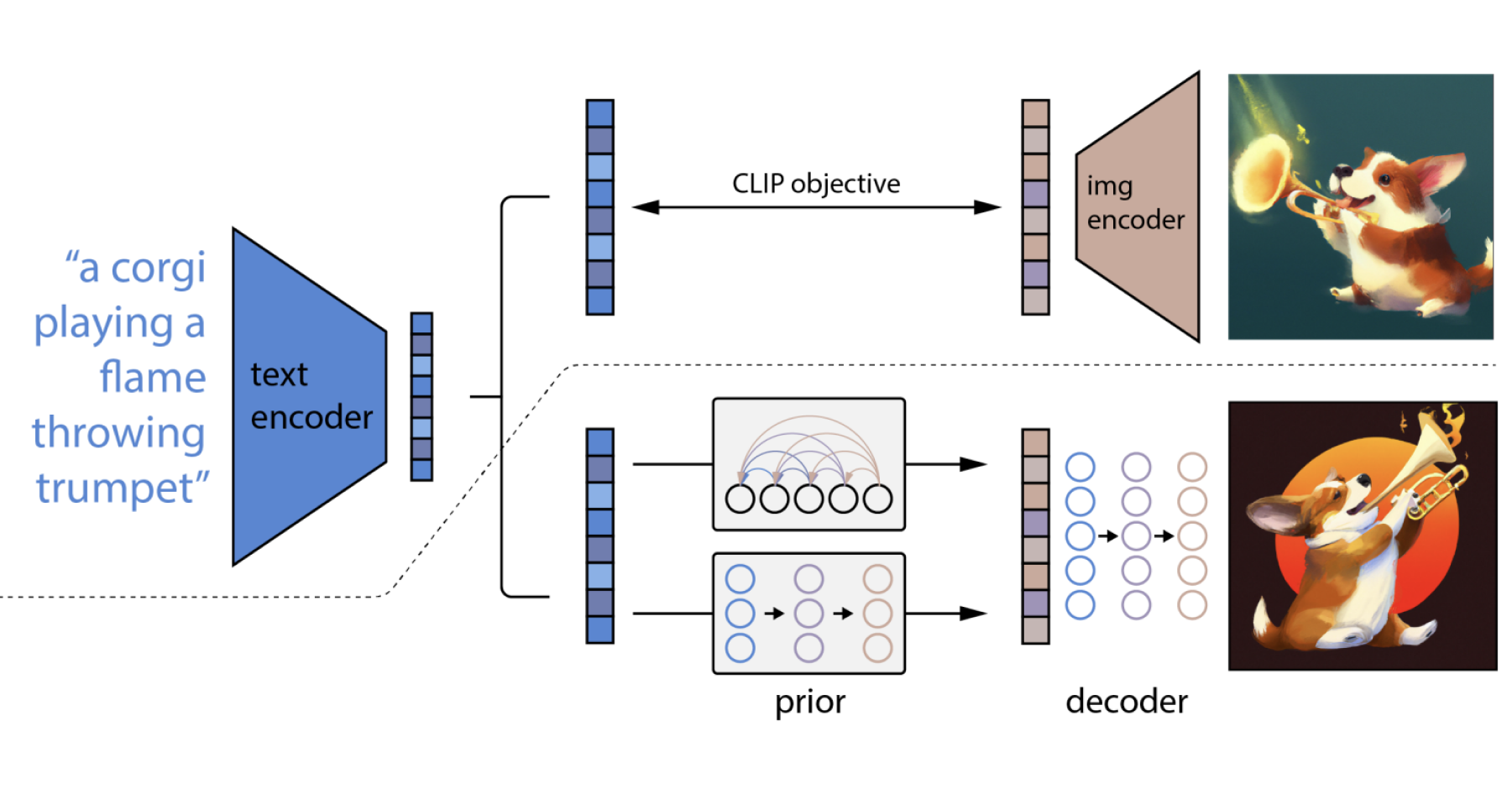

OpenAI's unCLIP Text-to-Image System Leverages Contrastive and Diffusion Models to Achieve SOTA Performance | Synced

From DALL·E to Stable Diffusion: How Do Text-to-Image Generation Models Work? - Edge AI and Vision Alliance

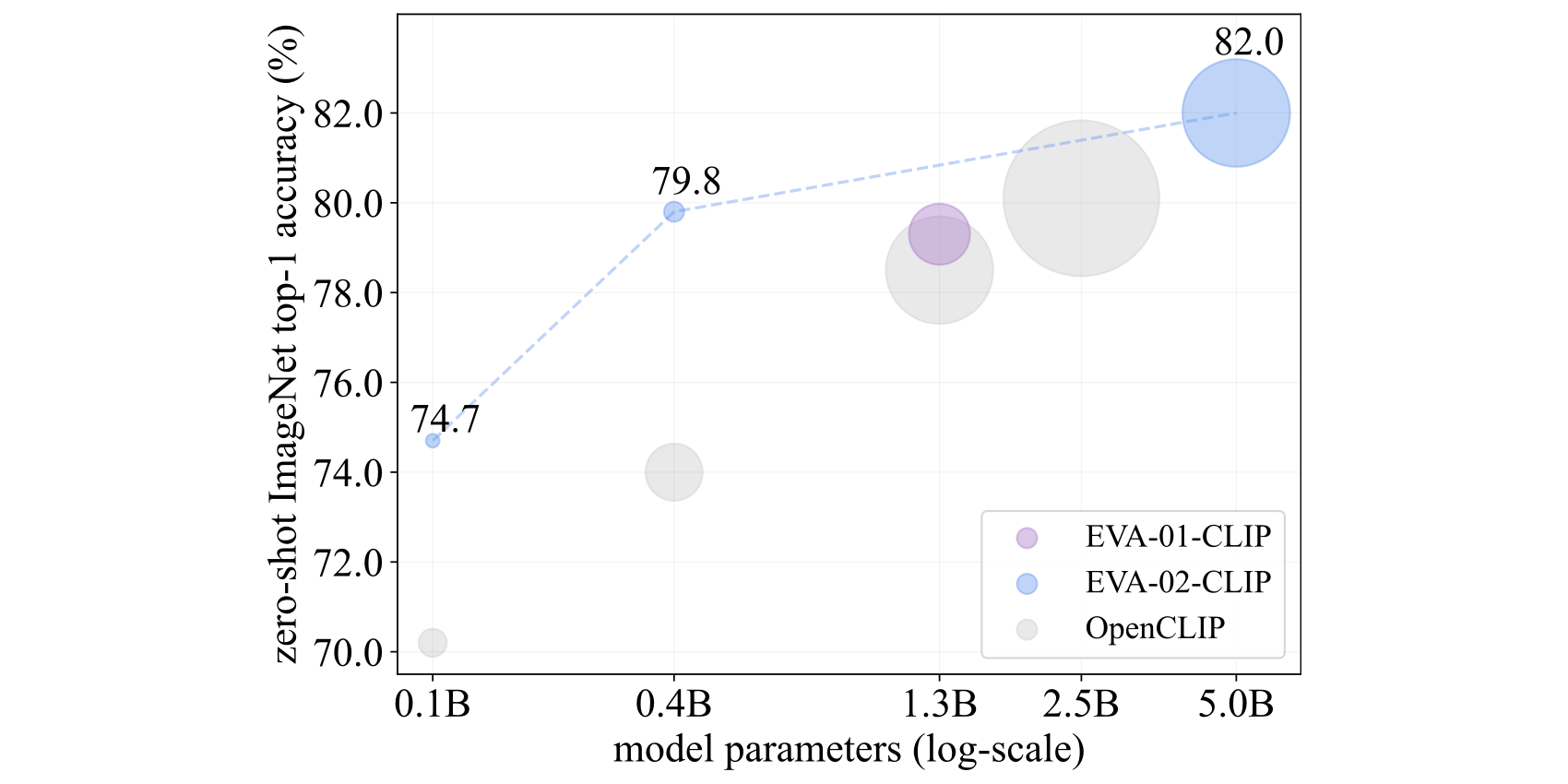

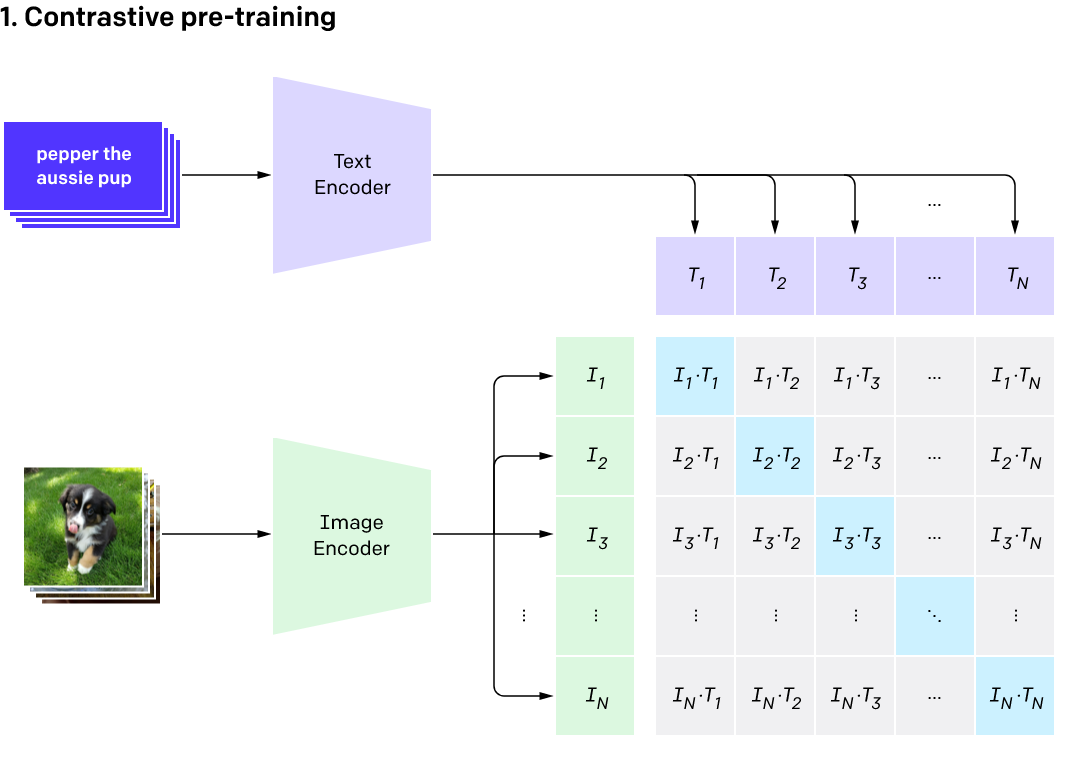

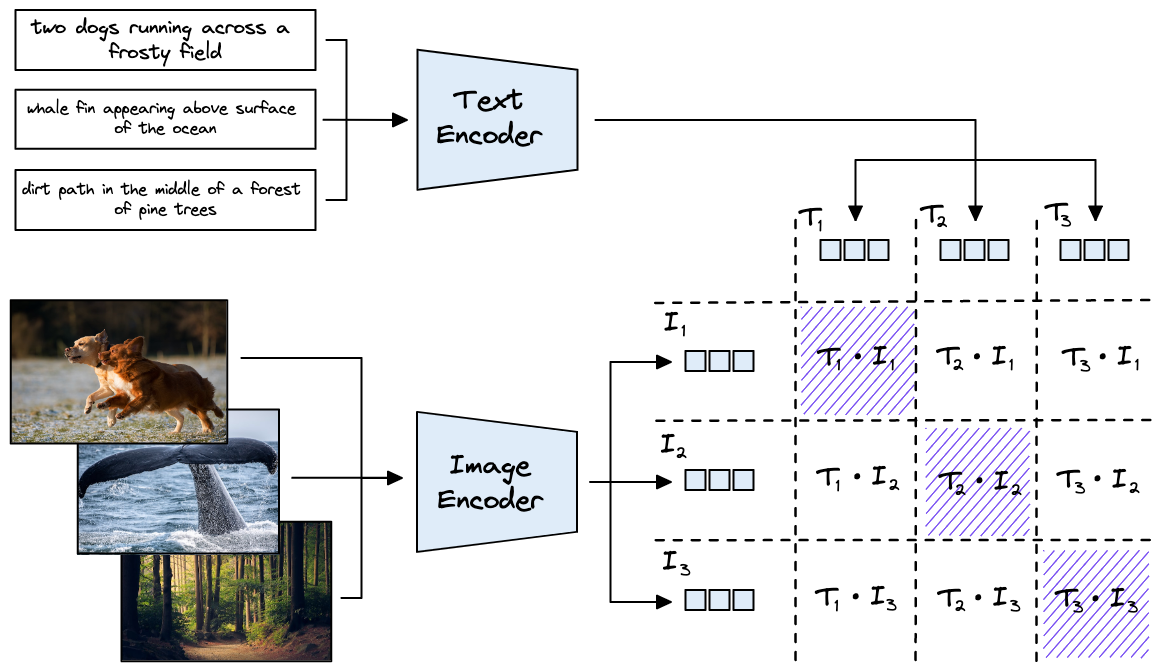

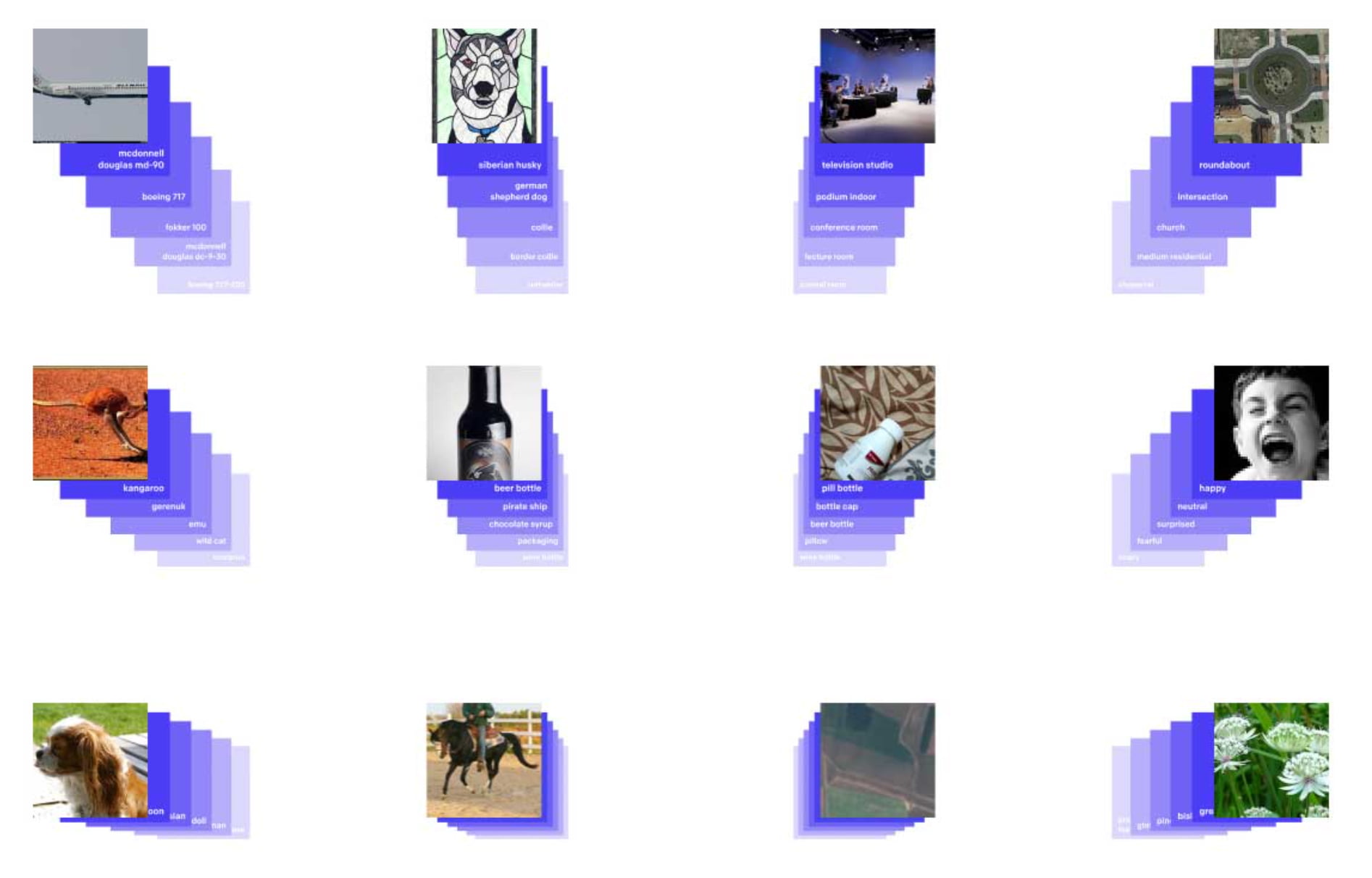

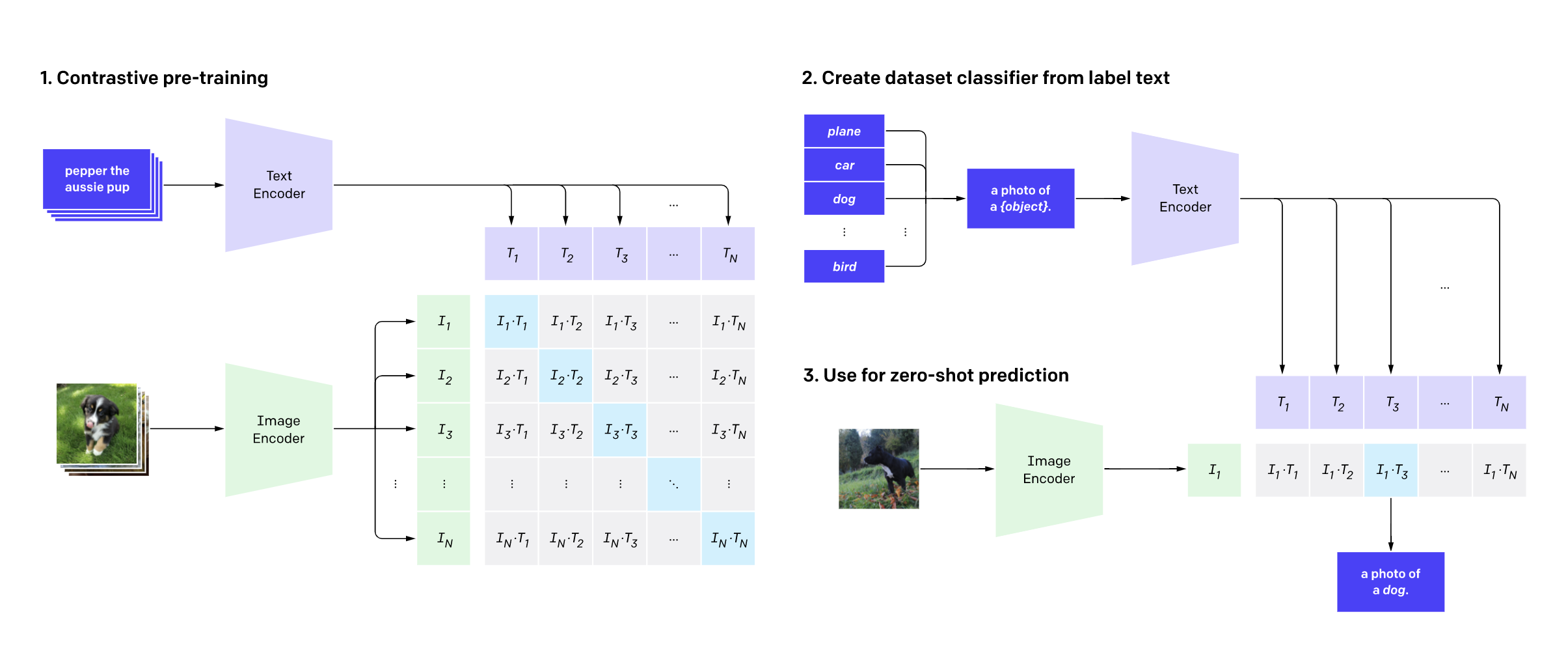

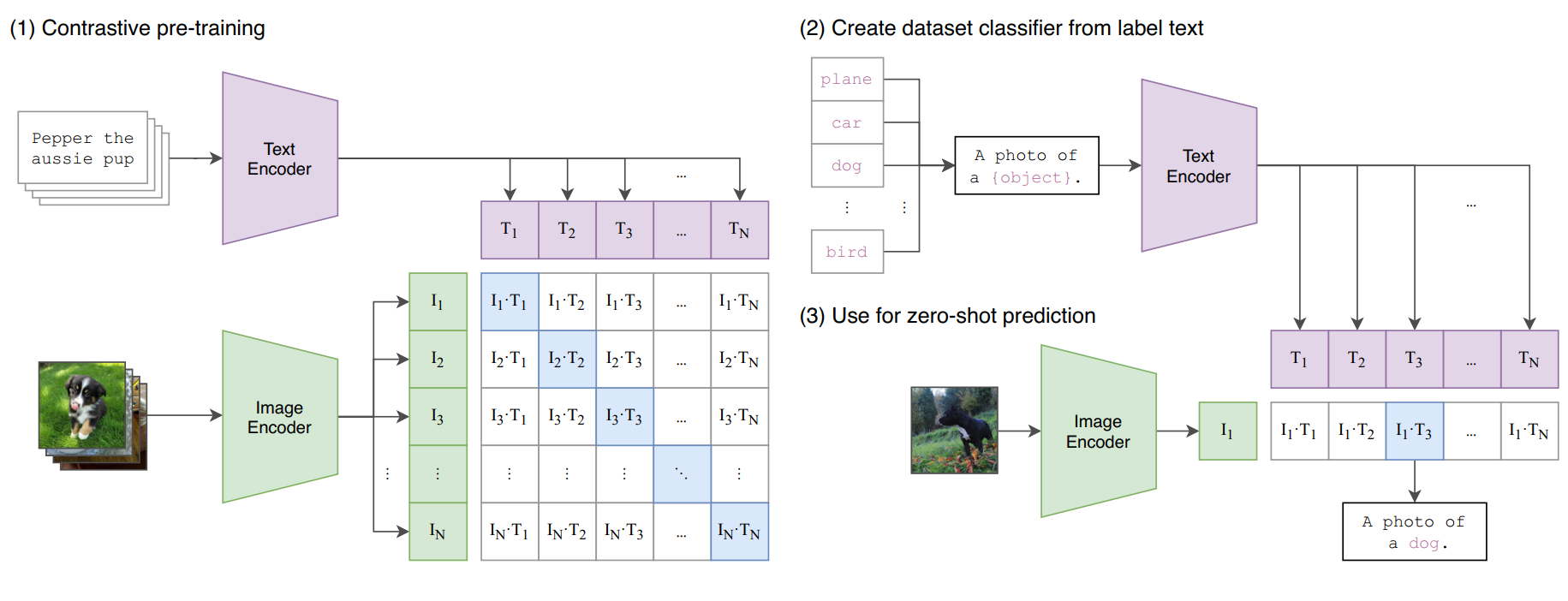

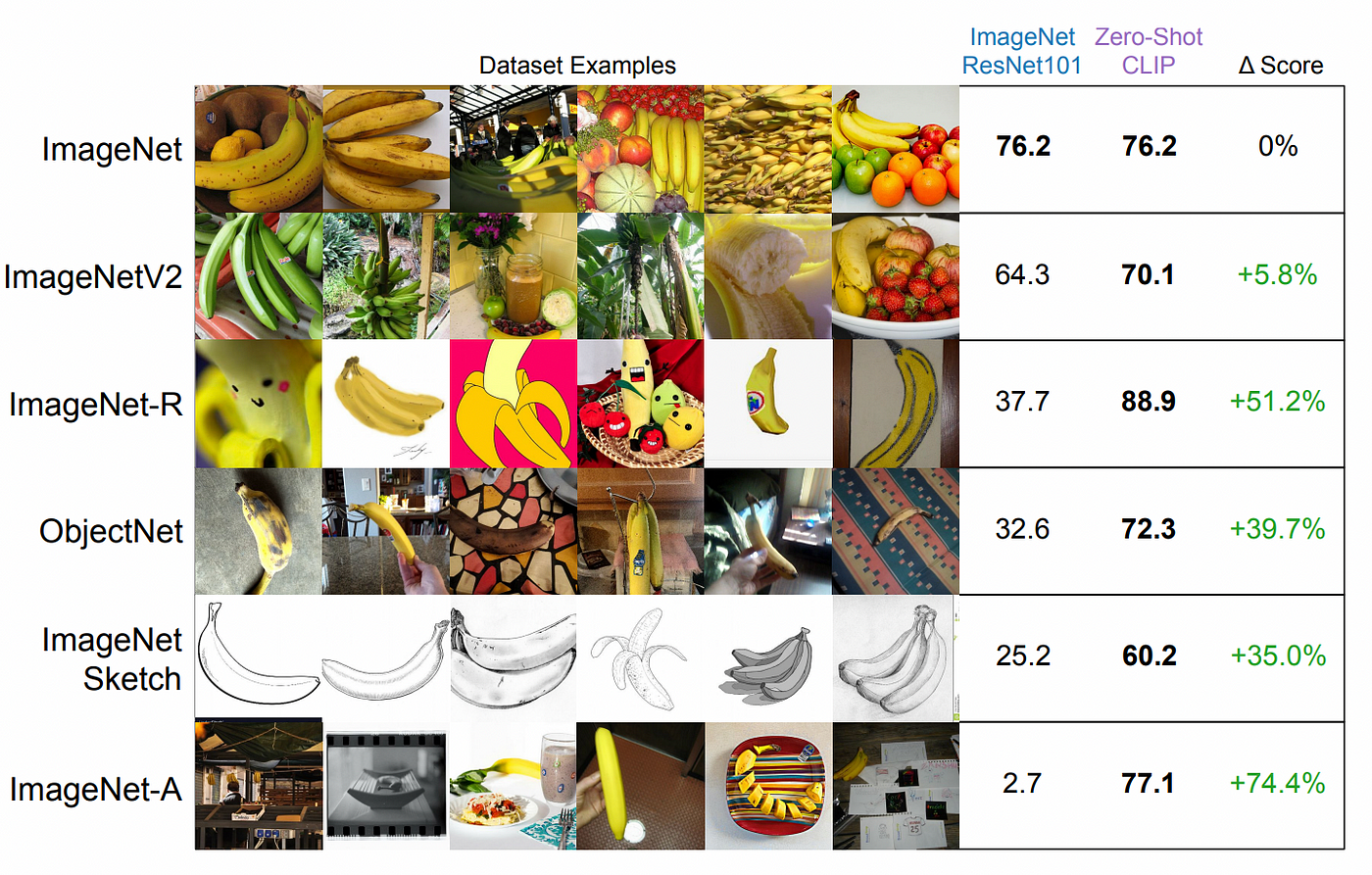

CLIP: The Most Influential AI Model From OpenAI — And How To Use It | by Nikos Kafritsas | Towards Data Science

Implement unified text and image search with a CLIP model using Amazon SageMaker and Amazon OpenSearch Service | AWS Machine Learning Blog